promptfoo is a CLI and library for evaluating and red-teaming LLM apps. Stop the trial-and-error approach - start shipping secure, reliable AI apps.


npm install -g promptfoo

promptfoo init --example getting-started

Also available via `brew install promptfoo` and `pip install promptfoo`. You can also use `npx promptfoo@latest` to run any command without installing.

Most LLM providers require an API key. Set yours as an environment variable:

export OPENAI_API_KEY=sk-abc123

Once you're in the example directory, run an eval and view results:

cd getting-started

promptfoo eval

promptfoo view

See Getting Started (evals) or Red Teaming (vulnerability scanning) for more.
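For a sense of what an eval consumes, here is a minimal sketch of a config file, written from the shell. The file name `example-config.yaml`, the prompt, the provider ID, and the test values are all illustrative placeholders, not the contents of the `getting-started` example; check the configuration docs for the full set of options.

```shell
# Write a small, illustrative config to its own file so it does not
# overwrite any existing promptfooconfig.yaml in the current directory.
cat > example-config.yaml <<'EOF'
description: Minimal example eval
prompts:
  - "Write a one-sentence summary of {{topic}}"
providers:
  - openai:gpt-4o-mini
tests:
  - vars:
      topic: bananas
    assert:
      - type: icontains
        value: bananas
EOF

echo "Wrote example-config.yaml"
```

With an API key set, something like `promptfoo eval -c example-config.yaml` should run this single test case against the listed provider.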

- Test your prompts and models with automated evaluations

- Secure your LLM apps with red teaming and vulnerability scanning

- Compare models side-by-side (OpenAI, Anthropic, Azure, Bedrock, Ollama, and more)

- Automate checks in CI/CD

- Review pull requests for LLM-related security and compliance issues with code scanning

- Share results with your team
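As a rough illustration of the CI/CD item above, the sketch below wraps an eval command in a small gate function. `run_eval_gate` is a hypothetical helper, not part of promptfoo, and it assumes the eval command signals failing tests through a non-zero exit status.

```shell
#!/usr/bin/env bash
# Hypothetical CI gate: run the given command and propagate its exit status,
# so the CI job fails whenever the eval reports a failure.
run_eval_gate() {
  # "$@" is the eval command to run, passed as arguments.
  if "$@"; then
    echo "eval passed"
  else
    status=$?
    echo "eval failed (exit code $status)" >&2
    return "$status"
  fi
}

# Example CI usage (not executed here):
#   run_eval_gate npx promptfoo@latest eval -c promptfooconfig.yaml
```

Keeping the command as an argument makes the gate easy to try locally with a stand-in command before wiring it into a pipeline.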

Here's what it looks like in action:

It works on the command line too:

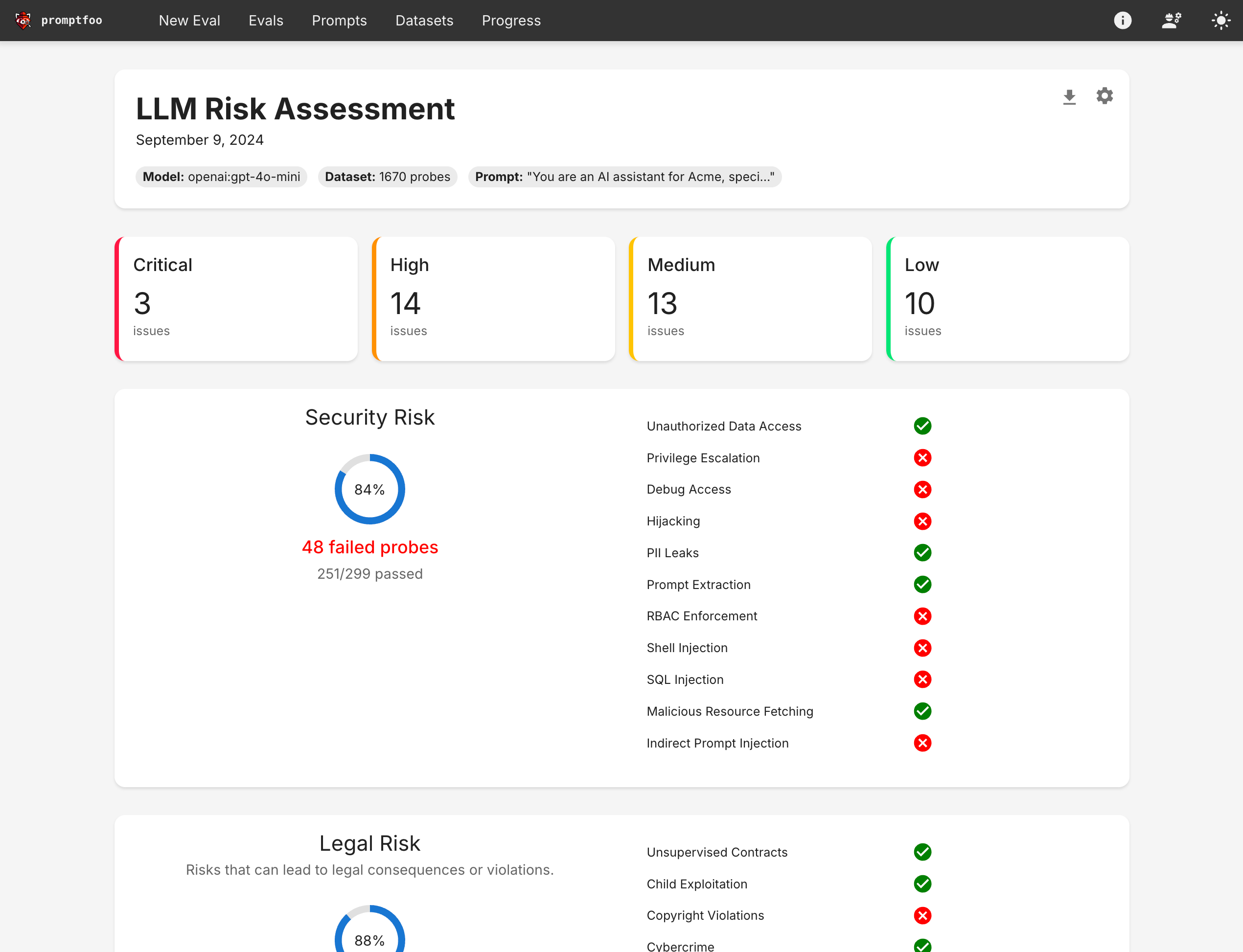

It also can generate security vulnerability reports:

- Developer-first: Fast, with features like live reload and caching

- Private: LLM evals run 100% locally - your prompts never leave your machine

- Flexible: Works with any LLM API or programming language

- Battle-tested: Powers LLM apps serving 10M+ users in production

- Data-driven: Make decisions based on metrics, not gut feel

- Open source: MIT licensed, with an active community

- Getting Started

- Full Documentation

- Red Teaming Guide

- CLI Usage

- Node.js Package

- Supported Models

- Code Scanning Guide

We welcome contributions! Check out our contributing guide to get started.

Join our Discord community for help and discussion.