This repository is the official implementation of the paper:

Panoptic-Depth Forecasting

Juana Valeria Hurtado*, Riya Mohan*, and Abhinav Valada.

IEEE International Conference on Robotics and Automation (ICRA), 2025

If you find our work useful, please consider citing our paper:

    @inproceedings{hurtado2025panoptic,
      title={Panoptic-depth forecasting},
      author={Hurtado, Juana Valeria and Mohan, Riya and Valada, Abhinav},
      booktitle={2025 IEEE International Conference on Robotics and Automation (ICRA)},
      pages={01--07},
      year={2025},
      organization={IEEE}
    }

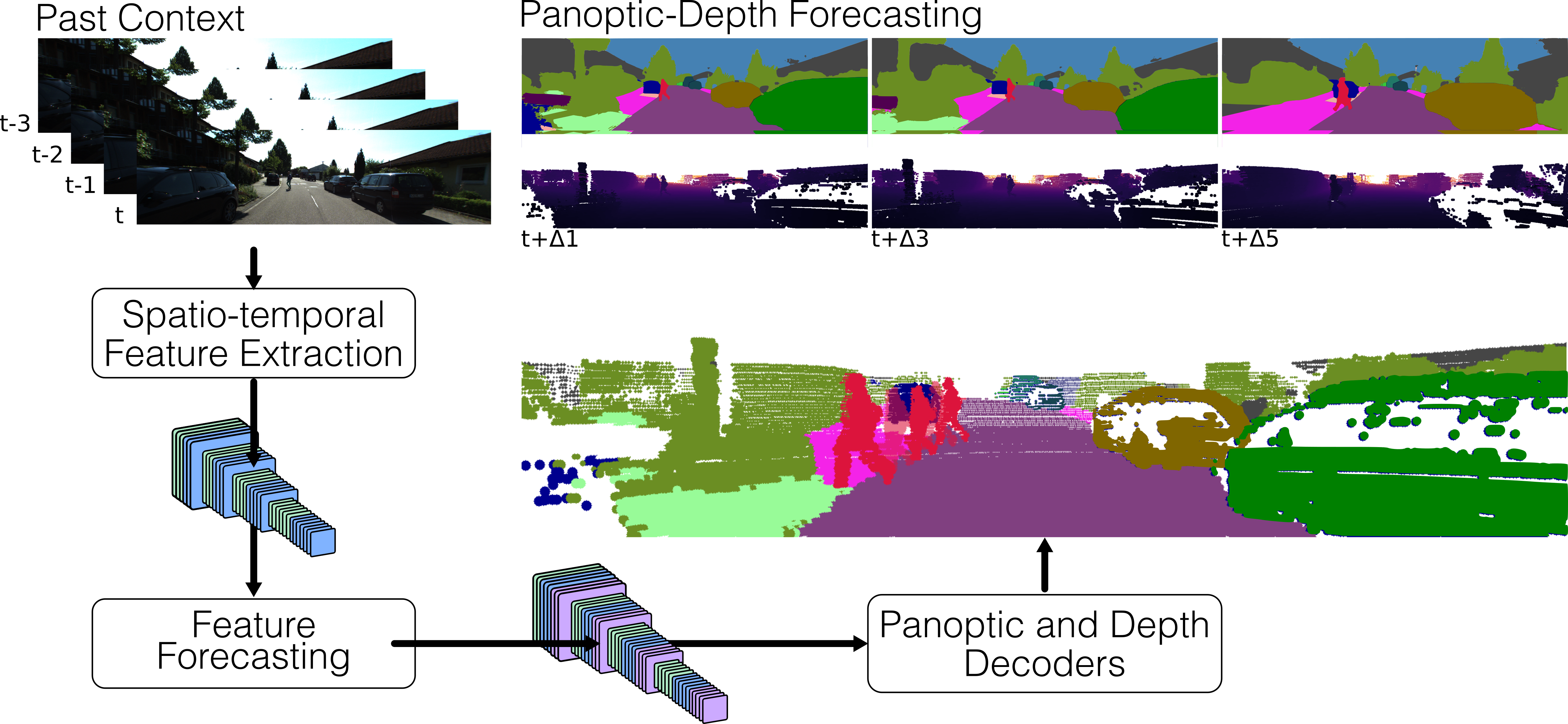

Forecasting the semantics and 3D structure of scenes is essential for robots to navigate and plan actions safely. Recent methods have explored semantic and panoptic scene forecasting; however, they do not consider the geometry of the scene. In this work, we propose the panoptic-depth forecasting task for jointly predicting the panoptic segmentation and depth maps of unobserved future frames, from monocular camera images. To facilitate this work, we extend the popular KITTI-360 and Cityscapes benchmarks by computing depth maps from LiDAR point clouds and leveraging sequential labeled data. We also introduce a suitable evaluation metric that quantifies both the panoptic quality and depth estimation accuracy of forecasts in a coherent manner. Furthermore, we present two baselines and propose the novel PDcast architecture that learns rich spatio-temporal representations by incorporating a transformer-based encoder, a forecasting module, and task-specific decoders to predict future panoptic-depth outputs. Extensive evaluations demonstrate the effectiveness of PDcast across two datasets and three forecasting tasks, consistently addressing the primary challenges.

- Linux

- Python 3.8

- PyTorch 1.12.1

- CUDA 11.3

- GCC 7 or higher

IMPORTANT NOTE: These requirements are not necessarily mandatory. However, we have only tested the code under the above settings and cannot provide support for other setups.
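
To see quickly how a local setup differs from the tested one, a small comparison helper can be useful. The following is a minimal sketch, not part of this repository: the function name `compare_with_tested_setup` and the `TESTED` dictionary are hypothetical, and only the Python, PyTorch, and CUDA versions listed above are compared.

```python
# Hypothetical helper (not part of the repository): compares version strings
# against the tested setup listed above.
TESTED = {"python": "3.8", "torch": "1.12.1", "cuda": "11.3"}


def compare_with_tested_setup(python_version, torch_version, cuda_version):
    """Return {component: (found, tested)} for every component that differs."""
    found = {
        # Keep only major.minor, e.g. "3.8.10" -> "3.8".
        "python": ".".join(python_version.split(".")[:2]),
        # Drop a local build suffix, e.g. "1.12.1+cu113" -> "1.12.1".
        "torch": torch_version.split("+")[0],
        # May be None for CPU-only PyTorch builds.
        "cuda": cuda_version,
    }
    return {name: (found[name], TESTED[name]) for name in TESTED if found[name] != TESTED[name]}
```

Inside the activated environment, the inputs could be obtained with `platform.python_version()`, `torch.__version__`, and `torch.version.cuda`. An empty result means the three versions match the tested setup.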

- Create the conda environment from file: `conda env create -f environment.yml`

- Activate the environment: `conda activate pdcast`

Download the following files from the KITTI-360 website:

- Perspective Images for Train & Val (128G): You can remove "01" in line 12 in `download_2d_perspective.sh` to only download the relevant images.

- Test Semantic (1.5G)

- Semantics (1.8G)

- Calibrations (3K)

Additionally, download our preprocessed train/val split files:

Place the downloaded files in the kitti_360/ folder.

After extraction and copying of the perspective images, one should obtain the following file structure:

    ── kitti_360
       ├── 2013_05_28_drive_train_frames_full.txt
       ├── 2013_05_28_drive_val_frames_full.txt
       ├── calibration
       │   ├── calib_cam_to_pose.txt
       │   └── ...
       ├── data_2d_raw
       │   ├── 2013_05_28_drive_0000_sync
       │   └── ...
       ├── data_2d_semantics
       │   └── train
       │       ├── 2013_05_28_drive_0000_sync
       │       └── ...
       └── data_2d_test
           ├── 2013_05_28_drive_0008_sync
           └── 2013_05_28_drive_0018_sync
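
The layout above can be checked before training with a short script. This is a minimal sketch and not part of the repository: the function name `find_missing_entries` is hypothetical, and it checks only the entries that are explicitly named in the tree (the elided `...` entries are not verified).

```python
from pathlib import Path

# Entries copied from the directory tree above; elided ("...") entries are skipped.
EXPECTED_ENTRIES = [
    "2013_05_28_drive_train_frames_full.txt",
    "2013_05_28_drive_val_frames_full.txt",
    "calibration/calib_cam_to_pose.txt",
    "data_2d_raw/2013_05_28_drive_0000_sync",
    "data_2d_semantics/train/2013_05_28_drive_0000_sync",
    "data_2d_test/2013_05_28_drive_0008_sync",
    "data_2d_test/2013_05_28_drive_0018_sync",
]


def find_missing_entries(dataset_root):
    """Return the expected entries that do not exist under dataset_root."""
    root = Path(dataset_root)
    return [entry for entry in EXPECTED_ENTRIES if not (root / entry).exists()]
```

For example, `find_missing_entries("kitti_360")` returns an empty list when every named file and folder is in place, and otherwise lists the relative paths that still need to be downloaded or copied.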

Before running training or evaluation, update the dataset path in the configuration file:

- Open `cfg/pdcast_kitti360.yaml`

- Replace `<PATH_TO_KITTI360_DATASET>` with the path to your KITTI-360 dataset root directory
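
The same edit can be scripted, which helps when the repository is set up on several machines. The sketch below is an assumption about one way to do it and is not shipped with the code: `set_dataset_path` is a hypothetical helper that performs a plain text substitution and does not parse the YAML.

```python
from pathlib import Path

# Placeholder token as it appears in cfg/pdcast_kitti360.yaml.
PLACEHOLDER = "<PATH_TO_KITTI360_DATASET>"


def set_dataset_path(config_file, dataset_root):
    """Replace the dataset placeholder in config_file; return the number of replacements."""
    config_path = Path(config_file)
    text = config_path.read_text(encoding="utf-8")
    count = text.count(PLACEHOLDER)
    if count:
        # Rewrite the file only when the placeholder is actually present.
        config_path.write_text(text.replace(PLACEHOLDER, str(dataset_root)), encoding="utf-8")
    return count
```

A call such as `set_dataset_path("cfg/pdcast_kitti360.yaml", "/data/kitti_360")` (the dataset path here is only an example) returns how many occurrences were replaced. A return value of 0 means the placeholder was already filled in and the file was left untouched.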

To train PDcast on KITTI-360:

- Open `scripts/train.sh` and fill in the placeholder values:
  - `<CONDA_ENV>`: Your conda environment name (e.g., `pdcast`)
  - `<GPU_IDS>`: GPU IDs to use (e.g., `0,1,2,3` for 4 GPUs)
  - `<NUM_GPUS>`: Number of GPUs (e.g., `4`)
  - `<MASTER_PORT>`: Port for distributed training (e.g., `22019`)
  - `<RUN_NAME>`: Name for your training run (e.g., `pdcast_kitti360_exp1`)
  - `<PROJECT_ROOT>`: Absolute path to this repository
  - `<CONFIG_FILE>`: Config filename (e.g., `pdcast_kitti360.yaml`)

- Run the training script: `bash scripts/train.sh`
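
A forgotten placeholder is an easy mistake to make before launching a long run. The following minimal sketch, which is not part of the repository, scans the text of a script for leftover tokens of the form `<UPPER_CASE_NAME>`; the function name `find_unfilled_placeholders` and the exact pattern are assumptions.

```python
import re

# Matches tokens such as <CONDA_ENV> or <MASTER_PORT>:
# an upper-case letter followed by upper-case letters, digits, or underscores.
PLACEHOLDER_PATTERN = re.compile(r"<[A-Z][A-Z0-9_]*>")


def find_unfilled_placeholders(script_text):
    """Return the sorted, de-duplicated placeholder tokens still present in script_text."""
    return sorted(set(PLACEHOLDER_PATTERN.findall(script_text)))
```

Passing the contents of `scripts/train.sh` (for example, read with `pathlib.Path("scripts/train.sh").read_text()`) should give an empty list once every value has been filled in. The same check applies to `scripts/eval.sh`.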

To evaluate a trained model:

- Open `scripts/eval.sh` and fill in the placeholder values:
  - `<CONDA_ENV>`: Your conda environment name (e.g., `pdcast`)
  - `<GPU_ID>`: Single GPU ID for evaluation (e.g., `0`)
  - `<MASTER_PORT>`: Port number (e.g., `22019`)
  - `<RUN_NAME>`: Name for evaluation run (e.g., `pdcast_eval`)
  - `<PROJECT_ROOT>`: Absolute path to this repository
  - `<CONFIG_FILE>`: Config filename (e.g., `pdcast_kitti360.yaml`)
  - `<CHECKPOINT_PATH>`: Absolute path to your model checkpoint (`.pth` file)

- Run the evaluation script: `bash scripts/eval.sh`

The evaluation will output PDC-Q (Panoptic Depth Consistency Quality) metrics at forecasting horizons of 1, 3, and 5 frames.

We provide pre-trained model weights for PDcast on KITTI-360. The model achieves the following performance on the validation set:

| Dataset | PDC-Q Δ1 | PDC-Q Δ3 | PDC-Q Δ5 | Download |
|---|---|---|---|---|
| KITTI-360 | 0.429 | 0.377 | 0.338 | checkpoint |

PDC-Q (Panoptic Depth Consistency Quality) measures the joint forecasting quality of panoptic segmentation and depth estimation across different temporal horizons (1, 3, and 5 frames ahead).

To use the pre-trained model:

- Download the checkpoint from the link above

- Update the `<CHECKPOINT_PATH>` in `scripts/eval.sh` with the path to the downloaded file

- Run evaluation as described in the Evaluation section

For academic usage, the code is released under the GPLv3 license. For any commercial purpose, please contact the authors.

This codebase is built upon and adapted from the open-source CoDEPS repository: https://github.com/robot-learning-freiburg/CoDEPS

We sincerely thank the authors for making their implementation publicly available.

This work was funded by the German Research Foundation (DFG) Emmy Noether Program, grant number 468878300.