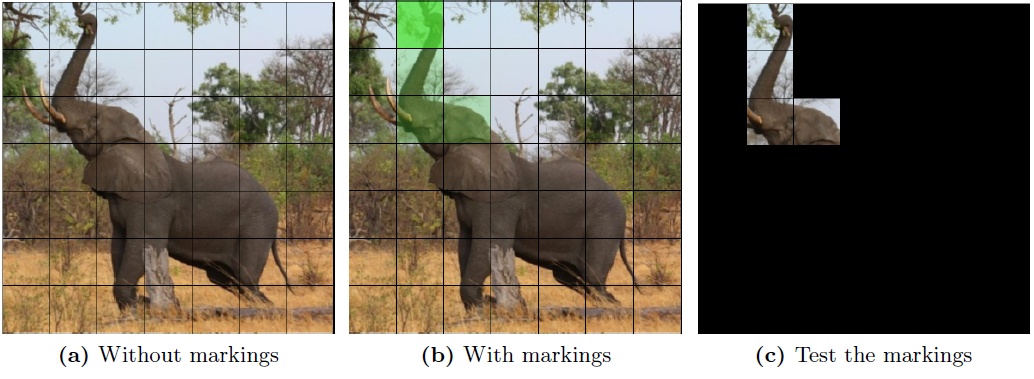

The basic idea for the paper was to compare what humans find important in an image with what five models trained on ImageNet-1k find important. We built a web tool that 5 + 25 humans used to create saliency maps for three selected images (African camel, gorilla, and toucan). The human saliency maps were then compared with saliency maps produced by the five ML models. All code for this, with the exception of the web tool, can be found here:

k3larra/XAI-F
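To make the comparison step concrete, here is a minimal sketch of how a human saliency map and a model saliency map for the same image could be scored against each other. It is not taken from the repository: the function names (`normalize`, `pearson_similarity`, `top_fraction_iou`), the choice of Pearson correlation and top-fraction IoU as similarity measures, and the 20% threshold are all assumptions made for illustration. The measures actually used in the paper may differ.

```python
import numpy as np


def normalize(saliency):
    """Rescale a 2-D saliency map to the range [0, 1] (hypothetical helper)."""
    s = np.asarray(saliency, dtype=float)
    span = s.max() - s.min()
    if span == 0:
        # A constant map carries no ranking information; return all zeros.
        return np.zeros_like(s)
    return (s - s.min()) / span


def pearson_similarity(map_a, map_b):
    """Pearson correlation between two saliency maps of equal shape."""
    a = normalize(map_a).ravel()
    b = normalize(map_b).ravel()
    if a.std() == 0 or b.std() == 0:
        # Correlation is undefined for constant maps; report 0 by convention.
        return 0.0
    return float(np.corrcoef(a, b)[0, 1])


def top_fraction_iou(map_a, map_b, fraction=0.2):
    """Intersection over union of the most salient pixels in each map.

    `fraction` is an assumed threshold: the share of pixels treated as salient.
    """
    a = normalize(map_a)
    b = normalize(map_b)
    mask_a = a >= np.quantile(a, 1 - fraction)
    mask_b = b >= np.quantile(b, 1 - fraction)
    union = np.logical_or(mask_a, mask_b).sum()
    if union == 0:
        return 0.0
    return float(np.logical_and(mask_a, mask_b).sum() / union)


if __name__ == "__main__":
    # Synthetic stand-ins for a human map and a model map of the same image.
    rng = np.random.default_rng(0)
    human_map = rng.random((224, 224))
    model_map = 0.5 * human_map + 0.5 * rng.random((224, 224))
    print("Pearson:", pearson_similarity(human_map, model_map))
    print("Top-20% IoU:", top_fraction_iou(human_map, model_map))
```

Both functions expect maps of the same shape, so a human map drawn in the web tool would first need to be resized to the resolution of the model's saliency map.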
