UPSTREAM PR #21240: Relax prefill parser to allow space. #1324



Overview

Impact: Minor. No performance concerns identified.

Function Analysis: 24 modified functions (0.02% of 124,016 total). Changes are isolated to a chat template parser enhancement and compiler optimizations in auxiliary tools.

Binaries Analyzed (15 total):

Total system power consumption: -0.018% (negligible).

Function Analysis

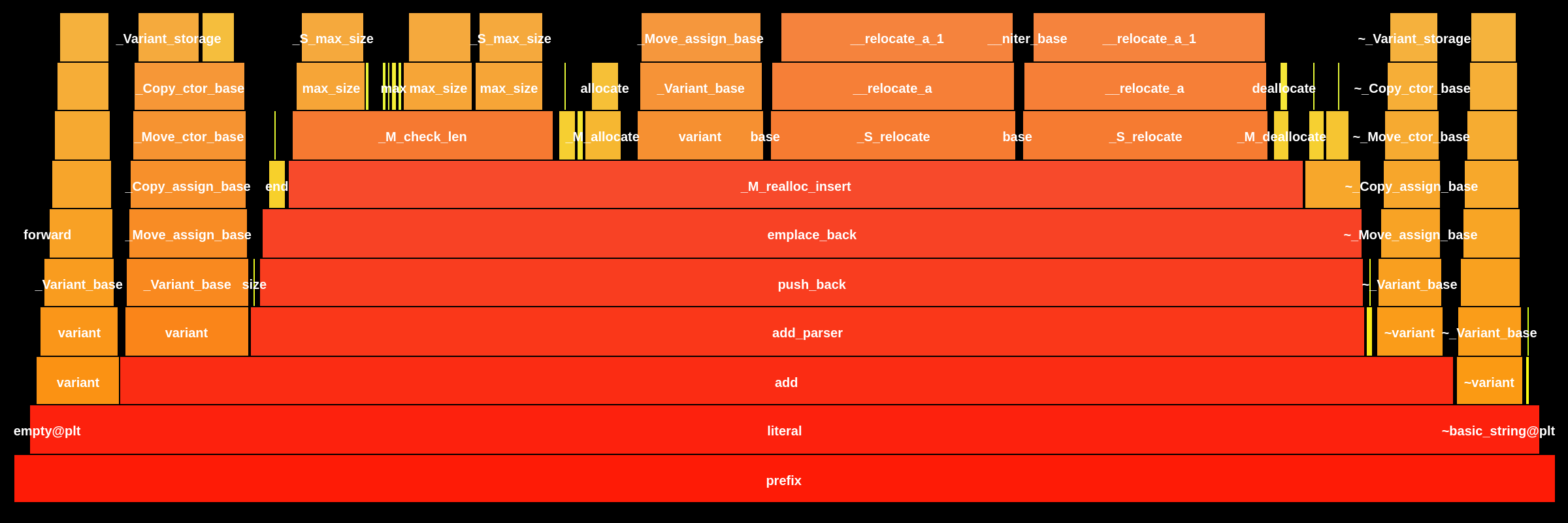

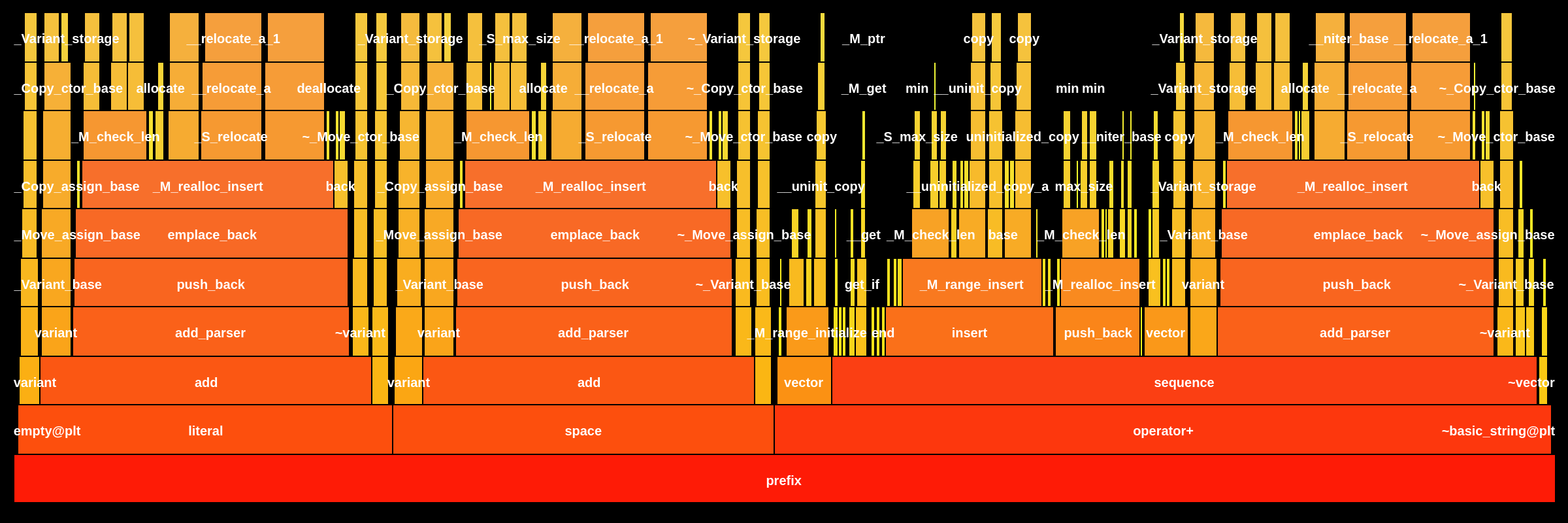

Compiler optimizations (6 functions):

Minor compiler artifacts (5 functions):

Other analyzed functions saw negligible changes.

Flame Graph Comparison

Function: The target version introduces new call chains for

Additional Findings

No inference impact: Zero changes to

🔎 Full breakdown: Loci Inspector


Note

Source pull request: ggml-org/llama.cpp#21240

Overview

As in the title.

Additional information

The prefill parser strictly required the reasoning marker at the very start of the message, which broke parsing for models that like to insert something before it, e.g. a newline.
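The behavioural difference described above can be illustrated with a minimal Python sketch. This is not llama.cpp's parser (which is C++ and not shown in this PR description). The `<think>`/`</think>` markers and the function names `strict_has_marker`, `relaxed_has_marker` and `split_reasoning` are hypothetical. The sketch also assumes that "relaxed" means skipping any leading whitespace before looking for the marker; the exact set of characters upstream accepts is not stated here.

```python
# Hypothetical sketch, NOT llama.cpp's implementation.
# The markers and all function names below are illustrative assumptions.

REASONING_START = "<think>"   # assumed reasoning-start marker
REASONING_END = "</think>"    # assumed reasoning-end marker


def strict_has_marker(message: str, start: str = REASONING_START) -> bool:
    """Old behaviour (as described): the marker must be the very first characters."""
    return message.startswith(start)


def relaxed_has_marker(message: str, start: str = REASONING_START) -> bool:
    """Relaxed behaviour (assumed): leading whitespace such as a newline is skipped."""
    return message.lstrip().startswith(start)


def split_reasoning(message: str, start: str = REASONING_START,
                    end: str = REASONING_END):
    """Return (reasoning, content); reasoning is None if no marker is found."""
    body = message.lstrip()  # tolerate whitespace before the marker
    if not body.startswith(start):
        return None, message
    rest = body[len(start):]
    reasoning, sep, content = rest.partition(end)
    if not sep:
        # Unterminated block (e.g. still streaming): treat it all as reasoning.
        return rest.strip(), ""
    return reasoning.strip(), content.lstrip()
```

With this sketch, a model output such as `"\n<think>plan</think>Answer"` is rejected by `strict_has_marker` but accepted by `relaxed_has_marker`, and `split_reasoning` returns `("plan", "Answer")`. Stripping only leading whitespace keeps the check conservative: any other text before the marker still means the message is treated as plain content.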

Requirements