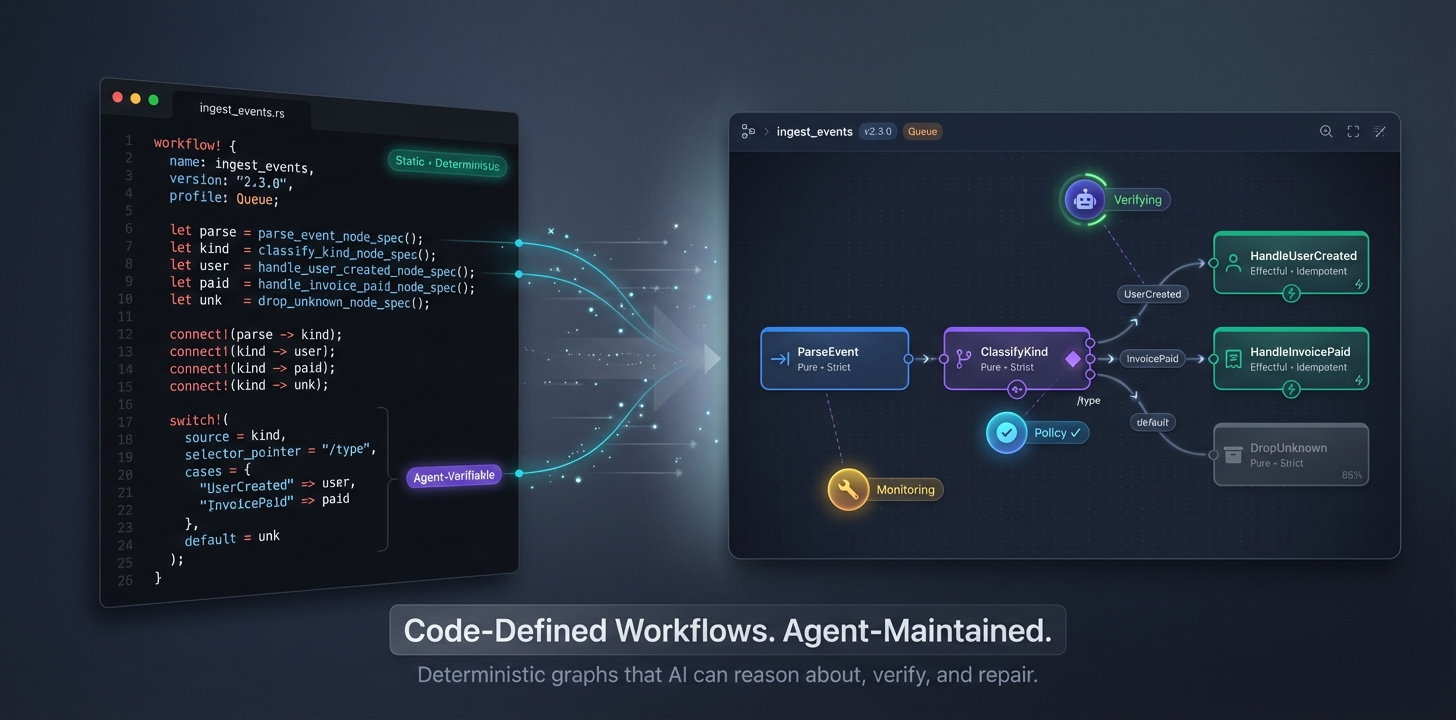

Lattice is a Rust-first workflow automation platform designed to give human and AI authors a typed, policy-aware alternative to low-code orchestrators such as n8n or Temporal JSON definitions. Authors express flows through a macro DSL that expands to:

- Native Rust execution units (`#[def_node]`, `flow!`)

- A canonical Flow Intermediate Representation (Flow IR) captured as JSON

- Validation diagnostics with stable error codes (e.g. `DAG201`, `IDEM020`)

- Execution plans that target multiple hosts (Tokio/Web, Redis queue, Temporal, WASM, Python gRPC)

The workspace provides everything needed to go from macro-authored code to running workflows on local executors, queue workers, or edge WASM packs, with gating harnesses for policy, determinism, and idempotency.

- Code-first: Rust is the source of truth. Macros add structure without hiding control flow so developers and agents retain full language power.

- Typed contracts: All ports, params, capabilities, and policies flow through Flow IR and JSON Schemas, enabling automated tooling and Studio visualisation.

- Determinism & effects: Every node declares its effects (`Pure`, `ReadOnly`, `Effectful`) and determinism (`Strict`, `Stable`, `BestEffort`, `Nondeterministic`) so the runtime can enforce caching, retries, and compensations.

- Policy & compliance: Capabilities, secrets, and egress are gated at compile time, certification, and runtime. Error codes map directly to policy remediation steps.

- Extensibility: Plugins (WASM, Python) and connector specs allow external teams or agents to extend the platform while preserving sandboxing and evidence trails.
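To make the determinism and effects principle concrete, here is a minimal sketch of how a runtime could turn those declarations into caching and retry decisions. The enum names mirror the levels listed above, but the types and both rules are assumptions made for illustration; the real definitions live in `dag-core` and may differ.

```rust
// Hypothetical mirror of the effect and determinism levels named above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Effects {
    Pure,
    ReadOnly,
    Effectful,
}

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Determinism {
    Strict,
    Stable,
    BestEffort,
    Nondeterministic,
}

/// Assumed rule: a result may be cached only when the node has no side
/// effects and its output is reproducible (Strict or Stable).
pub fn is_cacheable(effects: Effects, determinism: Determinism) -> bool {
    let side_effect_free = matches!(effects, Effects::Pure | Effects::ReadOnly);
    let reproducible = matches!(determinism, Determinism::Strict | Determinism::Stable);
    side_effect_free && reproducible
}

/// Assumed rule: side-effect-free nodes can be retried blindly, while an
/// Effectful node needs an idempotency key before a retry is safe.
pub fn is_retry_safe(effects: Effects, has_idempotency_key: bool) -> bool {
    match effects {
        Effects::Pure | Effects::ReadOnly => true,
        Effects::Effectful => has_idempotency_key,
    }
}
```

Under these assumed rules, `is_cacheable(Effects::Pure, Determinism::Strict)` holds, while an `Effectful` node without an idempotency key is neither cacheable nor safe to retry.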

- Macro-authored flows with compile-time metadata and stable validation diagnostics.

- `flows graph check`, `flows entrypoints check`, `flows run local`, and `flows run serve`.

- In-process execution via `host-inproc` and HTTP serving via `host-web-axum`.

- Run-scoped workspace capability with native and Workers backends.

- Host-owned connector runtime/bindings substrate with local OAuth2 refresh and service-account JWT providers.

- Runnable connector-owned examples for GitHub Issues and Google Sheets.

- Workerd/Miniflare-backed `host-workers` coverage for durability and workspace semantics.

- Workers parity for richer connector binding/provider execution.

- Connector trigger runtime, inbound verifier providers, and activation lifecycle.

- Larger connector farming surface beyond the first serious Google Sheets slice.

- Broader real-world workflow example coverage across multiple domains.

- `host-temporal`

- `registry-cert`

- `importer-n8n`

- `plugin-python`

- `plugin-wasi`

- `studio-backend`

/Cargo.toml # Workspace manifest (MSRV 1.90, shared deps)

/impl-docs/ # Contract specs, ADRs, diagnostics, and acceptance stories

/schemas/ # Flow IR JSON Schema + reference artifacts

/crates/ # Primary library, runtime, tooling, and adapters

dag-core/ # Flow IR types, diagnostics, builder utilities

dag-macros/ # Authoring DSL (`#[def_node]`, `flow!`, control surfaces)

kernel-plan/ # Flow IR validators + lowering and policy checks

kernel-exec/ # Runtime for execution plans, resume, and resource overlays

capabilities/, cap-* # Capability typestates + concrete providers

host-inproc/, host-web-axum/, host-workers/, bridge-queue-redis/, host-temporal/ # Runtime + execution bridges

plugin-wasi/, plugin-python/ # Sandbox runtimes for WASM and gRPC plugins (early scaffold)

exporters/ # Flow IR exporters (JSON, DOT, future OpenAPI/WIT)

registry-client/, registry-cert/ # Certification + registry publication tooling

connector-spec/, connectors-std/ # Connector generation + curated packs

policy-engine/ # CEL-based policy evaluation (scaffold)

testing-harness-idem/ # Idempotency harness utilities

cli/ # `flows` CLI commands

/examples/s1_echo/ # Canonical S1 webhook example using macros

- 0.1 contract specs: `impl-docs/spec/`

- Flow IR schema: `schemas/flow_ir.schema.json`

- User stories & acceptance criteria: `impl-docs/user-stories.md`

- Diagnostic registry: `impl-docs/error-codes.md`

| Crate | Purpose |
|---|---|
| `dag-core` | Canonical types: Flow IR structs, builder helpers, diagnostics, effects/determinism. |
| `dag-macros` | Procedural macros expanding Rust nodes/triggers/flows and emitting Flow IR. Includes trybuild suites for diagnostics. |
| `kernel-plan` | Validation engine enforcing DAG rules, port compatibility, cycle detection, and idempotency preconditions. Produces `ValidatedIR`. |
| `kernel-exec` | In-process executor with scheduling, deadlines, resource overlays, and resume integration points. |
| `exporters` | Flow IR exporters (`to_json_value`, `to_dot`) consumed by CLI and Studio. |
| `flows-cli` | CLI entrypoint for graph validation, entrypoint checks, local execution, and local serving. |
| `capabilities`, `cap-*` | Typestate traits and concrete implementations for HTTP, KV, blob, cache, dedupe, clock, workspace, etc. |
| `host-*` | Host adapters for Axum/web, Cloudflare Workers, Redis queue workers, and future Temporal lowering. |
| `plugin-*` | Sandboxed plugin runtimes (WASM/Wasmtime + WIT, Python gRPC). |
| `registry-*` | Publishing, signing, and certification harness integration. |
| `connector-spec`, `connectors-std` | YAML schema/codegen for connectors, curated packs. |
| `testing-harness-idem` | Duplicate injection + evidence capture for idempotency certs. |

- Define nodes & triggers using macros in your crate:

    #[def_node(name = "Normalize", effects = "Pure", determinism = "Strict")]
    async fn normalize(event: Order) -> NodeResult<SanitisedOrder> { ... }

    #[def_node(trigger, name = "Webhook")]
    async fn webhook(req: HttpRequest) -> NodeResult<WebhookEvent> { ... }

- Assemble flows with `flow!`, using `node!(...)` helpers for bindings. The macro emits both Rust wiring and Flow IR JSON artefacts.

- Inspect Flow IR using the CLI:

cargo run -p flows-cli -- graph check --input flow_ir.json --emit-dot

- Validate with `kernel-plan::validate` (automatically invoked by the CLI) to catch cycles, port mismatches, or missing idempotency keys.

- Export DOT or JSON for Studio/agents via the `exporters` crate or CLI options.

The examples/s1_echo crate demonstrates a minimal Web profile flow with trigger, inline logic, and responder nodes.
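The export step can be pictured with a small standalone sketch that renders a graph as Graphviz DOT. `MiniFlow` and `render_dot` are hypothetical names invented for this illustration; they are not the `exporters` API, and the sketch assumes aliases contain no double quotes, so it does no escaping.

```rust
use std::fmt::Write;

/// Hypothetical, minimal stand-in for a flow graph: node aliases plus
/// directed edges between aliases. Not the real Flow IR types.
pub struct MiniFlow {
    pub name: String,
    pub nodes: Vec<String>,
    pub edges: Vec<(String, String)>,
}

/// Render the graph as Graphviz DOT text, one statement per line.
pub fn render_dot(flow: &MiniFlow) -> String {
    let mut out = String::new();
    // Writing into a String cannot fail, so the results are ignored.
    let _ = writeln!(out, "digraph \"{}\" {{", flow.name);
    for node in &flow.nodes {
        let _ = writeln!(out, "  \"{}\";", node);
    }
    for (from, to) in &flow.edges {
        let _ = writeln!(out, "  \"{}\" -> \"{}\";", from, to);
    }
    out.push_str("}\n");
    out
}
```

Piping the returned text into `dot -Tsvg` is one way to preview a graph; the CLI's `--emit-dot` option shown earlier covers the same need for real Flow IR documents.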

- Serialization: `dag-core::FlowIR` derives serde/schemars; schema at `schemas/flow_ir.schema.json`.

- IDs: `FlowId` derived from `{name, semver}` using UUID v5 (string-encoded for schema friendliness).

- Nodes: Capture alias, kind (`Trigger`, `Inline`, `Activity`, `Subflow`), port schemas, effects, determinism, idempotency spec, docs.

- Edges: Include delivery semantics (`AtLeastOnce`, `AtMostOnce`, `ExactlyOnce`), ordering, partition key, timeout, buffer policy.

- Control surfaces: Document branching (`Switch`, `If`), loops (`ForEach`, `Loop`), temporal hints, rate limits, error handlers.

- Diagnostics: All lints/errors map to `impl-docs/error-codes.md`. `dag-core` exports `Diagnostic` and a registry accessor for consumers.

Validation highlights implemented in `kernel-plan::validate`:

- Duplicate aliases (`DAG205`)

- Edge references to unknown nodes (`DAG201`)

- Cycle detection via DFS (`DAG200`)

- Port schema compatibility (named schema equality)

- Malformed idempotency declarations (`DAG004`)

Additional rules (delivery requirements, capability overlap, policy waivers) are outlined in the RFC and queued for future phases.
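Three of the checks listed above (duplicate aliases, unknown edge endpoints, and DFS cycle detection) can be sketched in a few dozen lines. This is an illustrative reimplementation over plain string aliases, not the code in `kernel-plan`; `Finding` and `check_graph` are hypothetical names, and only the diagnostic codes come from the list above.

```rust
use std::collections::{HashMap, HashSet};

/// Hypothetical finding type for this sketch.
#[derive(Debug, PartialEq, Eq)]
pub struct Finding {
    pub code: &'static str,
    pub detail: String,
}

#[derive(Clone, Copy)]
enum Visit {
    InProgress,
    Done,
}

/// Check node aliases and (from, to) edges, returning every finding.
pub fn check_graph<'a>(aliases: &[&'a str], edges: &[(&'a str, &'a str)]) -> Vec<Finding> {
    let mut findings = Vec::new();

    // DAG205: every alias must be unique.
    let mut known: HashSet<&str> = HashSet::new();
    for alias in aliases {
        if !known.insert(*alias) {
            findings.push(Finding { code: "DAG205", detail: format!("duplicate alias `{}`", alias) });
        }
    }

    // DAG201: both ends of an edge must name a known node.
    let mut adjacency: HashMap<&str, Vec<&str>> = HashMap::new();
    for (from, to) in edges {
        let mut resolved = true;
        for end in [*from, *to] {
            if !known.contains(end) {
                resolved = false;
                findings.push(Finding { code: "DAG201", detail: format!("unknown node `{}`", end) });
            }
        }
        if resolved {
            adjacency.entry(*from).or_default().push(*to);
        }
    }

    // DAG200: depth-first search; reaching an in-progress node means a cycle.
    let mut state: HashMap<&str, Visit> = HashMap::new();
    for start in aliases {
        if has_cycle(*start, &adjacency, &mut state) {
            findings.push(Finding { code: "DAG200", detail: format!("cycle reachable from `{}`", start) });
            break; // one report is enough for the sketch
        }
    }
    findings
}

fn has_cycle<'a>(
    node: &'a str,
    adjacency: &HashMap<&'a str, Vec<&'a str>>,
    state: &mut HashMap<&'a str, Visit>,
) -> bool {
    match state.get(node).copied() {
        Some(Visit::InProgress) => return true,
        Some(Visit::Done) => return false,
        None => {}
    }
    state.insert(node, Visit::InProgress);
    if let Some(next) = adjacency.get(node) {
        for n in next {
            if has_cycle(*n, adjacency, state) {
                return true;
            }
        }
    }
    state.insert(node, Visit::Done);
    false
}
```

The recursive DFS keeps the sketch short; a production validator working on very deep graphs would more likely use an explicit stack.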

- kernel-exec: In-process executor for validated flows, including resource overlays, run identity plumbing, and checkpoint/resume integration points.

- host-inproc: Shared runtime harness used by examples, tests, and higher-level hosts to execute validated flows in-process.

- host-web-axum: Axum adapter that mounts HTTP triggers, handles request facets, streaming responses, deadlines, and cancellation propagation.

- host-workers: Cloudflare Workers adapter with workerd/Miniflare coverage, DO-backed durability substrate, and Workers workspace wiring.

- bridge-queue-redis: Queue bridge for Redis-backed execution lanes; still earlier than the web/native path.

- host-temporal: Present in the workspace, but still scaffold-level rather than product-ready.

- plugin-wasi / plugin-python: Present as extension seams, but still scaffold-level rather than stable public integration surfaces.

- Capabilities: Traits in `capabilities` ensure compile-time enforcement of effect/determinism contracts (e.g., stable reads require pinned resources). Concrete providers live in `cap-*` crates.

- Current recommended local stack: `cap-http-reqwest`, `cap-workspace-fs`, `cap-blob-opendal` (filesystem backend where appropriate), and `cap-kv-opendal` (SQLite backend where appropriate).

- Current recommended Workers stack: `cap-http-workers`, `cap-workspace-workers`, `cap-kv-workers`, and `cap-do-workers`.

- Important distinction: workspace is run-scoped scratch; blob is durable artifact/object storage. Keep those capabilities separate even if they share a filesystem-backed implementation locally.

- Future SQL note: if Lattice grows a real SQL/DB capability, it should remain separate from `resource::kv` even when a KV provider happens to use SQLite internally.

- ConnectorSpec: Declarative YAML schema + Rust codegen for connectors, including manifest metadata (effects, determinism, egress, rate limits, tests).

- connectors-std: Umbrella crate re-exporting provider-specific connectors once generated.

- Registry: `registry-client` and `registry-cert` coordinate publishing, sigstore signing, SBOM snapshots, determinism/idempotency/test harness runs.
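The typestate idea behind the capability traits ("stable reads require pinned resources") can be illustrated with a small generic handle. Everything here, including `ResourceRef`, `Pinned`, `Unpinned`, and `stable_cache_key`, is a hypothetical name chosen for the sketch rather than the actual `capabilities` API.

```rust
/// Typestate marker: the resource version has not been fixed yet.
pub struct Unpinned;

/// Typestate marker: the resource is fixed to one exact version.
pub struct Pinned {
    version: String,
}

/// Handle to an external resource, tagged with its pinning state.
pub struct ResourceRef<State> {
    uri: String,
    state: State,
}

impl ResourceRef<Unpinned> {
    pub fn new(uri: &str) -> Self {
        ResourceRef { uri: uri.to_string(), state: Unpinned }
    }

    /// Pinning consumes the unpinned handle and records an exact version.
    pub fn pin(self, version: &str) -> ResourceRef<Pinned> {
        ResourceRef {
            uri: self.uri,
            state: Pinned { version: version.to_string() },
        }
    }
}

impl ResourceRef<Pinned> {
    /// Only pinned handles expose a stable cache key, so code that needs a
    /// stable read cannot be written against an unpinned resource.
    pub fn stable_cache_key(&self) -> String {
        format!("{}@{}", self.uri, self.state.version)
    }
}
```

Calling `stable_cache_key` on a `ResourceRef<Unpinned>` is a compile error because the method only exists for the `Pinned` state, which is the kind of compile-time enforcement the capability traits aim for.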

# Validate a Flow IR document (from file)

cargo run -p flows-cli -- graph check --input schemas/examples/s2_site.json

# Validate trigger/capture wiring

cargo run -p flows-cli -- entrypoints check --input schemas/examples/s2_site.json

# Execute a built-in example locally

cargo run -p flows-cli -- run local --example s1_echo --payload '{"value":" Hello "}'

# Serve a built-in example over HTTP

cargo run -p flows-cli -- run serve --example s1_echo --addr 127.0.0.1:8080

# Serve a bundled workflow artifact over HTTP

cargo run -p flows-cli -- run serve \

--bundle /tmp/flow.bundle \

--addr 127.0.0.1:8080

# Execute the connector-owned Google Sheets example via bindings.lock

cargo run -p flows-cli -- run local \

--example connector_google_sheets_local_flow \

--bindings-lock /tmp/google-sheets-live.bindings.lock.json

Still planned / incomplete:

- queue-first operator flows and richer bridge commands

- registry certification / publish flows

- importer-driven `n8n` translation path

- Rust 1.90 (workspace MSRV; `mise.toml` currently pins 1.93.0 for local tooling)

- `mise` for the repo-managed task/toolchain entrypoints

- Optional: Redis (queue profile), Wasmtime (plugin host), Temporal dev server (later phase)

# Fast local validation

mise run validate

# Native + wasm validation + secret scan (CI-shaped aggregate)

mise run validate-ci

# Build core crates directly

cargo check -p dag-core

cargo check -p dag-macros

cargo check -p kernel-plan

cargo check -p flows-cli

# Run macro UI tests (requires a writeable target dir; use env var to sidestep sandbox limits)

CARGO_TARGET_DIR=.target cargo test -p dag-macros --test trybuild

# Execute canonical examples

cargo test -p example-s1-echo

cargo run -p flows-cli -- run local --example s1_echo --payload '{"value":" Hello "}'

Note: When running tests inside sandboxed environments (e.g., the Codex CLI harness), set a workspace-local `CARGO_TARGET_DIR` to avoid cross-device link errors: `CARGO_TARGET_DIR=.target cargo test ...`.

If you work with encrypted private docs locally, keep `fnox.toml` untracked and start from `fnox.example.toml` rather than committing a real secret-manager config.

Implementation is organised into discrete phases (impl-docs/impl-plan.md):

- Phase 0: Workspace scaffold, linting, diagnostic registry (completed here).

- Phase 1: Core types/macros/IR/validator/exporters/CLI (partially implemented).

- Phase 2: In-process executor + Web host + inline caching.

- Phase 3: Queue profile, Redis dedupe/cache, idempotency harness.

- Phase 4: Registry, certification harness, connector spec infrastructure.

- Phase 5: Plugin hosts (WASM, Python) and capability extensions.

- Phase 6: WASM edge deployment + n8n importer.

- Phase 7+: Automated connector farming, Studio backend polish.

Each phase has explicit exit criteria, test suites, and CLI milestones to ensure incremental value delivery.

- Diagnostics carry structured metadata: code, subsystem, severity, message.

- Consumers retrieve the registry via `dag_core::diagnostic_codes()`.

- Validation and macro errors should use the canonical codes to enable consistent remediation playbooks and agent automation.

- Runtime diagnostics (e.g., `RUN030`, `TIME015`) will be emitted through the kernel runtime and surfaced in CLI/Studio once implemented.
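As a rough picture of what "structured metadata" means for a consumer, the sketch below models a registry entry with the four fields named above and a lookup by code. The types, the `validation` subsystem label, and the message texts are assumptions made for illustration; the real shapes are whatever `dag-core` exports and `impl-docs/error-codes.md` documents.

```rust
/// Hypothetical severity levels for this sketch.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Severity {
    Warning,
    Error,
}

/// Hypothetical registry entry carrying code, subsystem, severity, message.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct DiagnosticEntry {
    pub code: &'static str,
    pub subsystem: &'static str,
    pub severity: Severity,
    pub message: &'static str,
}

/// A tiny illustrative registry; subsystem labels and messages are made up.
pub fn sketch_registry() -> Vec<DiagnosticEntry> {
    vec![
        DiagnosticEntry { code: "DAG200", subsystem: "validation", severity: Severity::Error, message: "flow graph contains a cycle" },
        DiagnosticEntry { code: "DAG201", subsystem: "validation", severity: Severity::Error, message: "edge references an unknown node" },
        DiagnosticEntry { code: "DAG205", subsystem: "validation", severity: Severity::Error, message: "duplicate node alias" },
    ]
}

/// Find the entry for a stable code, if the registry knows it.
pub fn lookup(code: &str) -> Option<DiagnosticEntry> {
    sketch_registry().into_iter().find(|entry| entry.code == code)
}

/// Render an entry in a compiler-style single line.
pub fn render(entry: &DiagnosticEntry) -> String {
    let level = match entry.severity {
        Severity::Warning => "warning",
        Severity::Error => "error",
    };
    format!("{}[{}] ({}): {}", level, entry.code, entry.subsystem, entry.message)
}
```

Because the codes are stable, a tool or agent can key remediation steps on `entry.code` and treat the message purely as display text.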

Outlined in the RFC (impl-docs/rust-workflow-tdd-rfc.md, §16):

- Unit: Macro expansion, capability typestates, Flow IR serialization, validator rules.

- Property: Cycle detection, partition semantics, idempotency keys.

- Golden: Example flows (S1 echo, S2 SSE site) compiled and exported.

- Integration: HTTP runtime, queue dedupe & spill, Temporal workflows, plugin hosts.

- Certification harnesses: Determinism replay, idempotency duplicates, policy evidence.

Current repository includes unit/trybuild coverage for macros, Flow IR builder tests in dag-core, kernel-plan validation tests, exporter smoke tests, workerd/Miniflare coverage for host-workers, and runnable connector-owned example packages.
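The "idempotency duplicates" harness idea can be sketched as follows: deliver every message several times and confirm that each effect runs exactly once per idempotency key. `DedupeSink` and `inject_duplicates` are hypothetical names for this sketch and do not describe the `testing-harness-idem` API.

```rust
use std::collections::HashSet;

/// Hypothetical effectful sink that deduplicates by idempotency key.
#[derive(Default)]
pub struct DedupeSink {
    seen: HashSet<String>,
    applied: Vec<String>,
}

impl DedupeSink {
    /// Apply the payload unless this key was already processed.
    /// Returns true when the effect actually ran.
    pub fn deliver(&mut self, key: &str, payload: &str) -> bool {
        if !self.seen.insert(key.to_string()) {
            return false; // duplicate delivery: skip the effect
        }
        self.applied.push(payload.to_string());
        true
    }

    /// Payloads whose effects ran, in delivery order.
    pub fn applied(&self) -> &[String] {
        &self.applied
    }
}

/// Deliver every (key, payload) message `copies` times and report how
/// many effects actually ran.
pub fn inject_duplicates(
    sink: &mut DedupeSink,
    messages: &[(&str, &str)],
    copies: usize,
) -> usize {
    let mut ran = 0;
    for (key, payload) in messages {
        for _ in 0..copies {
            if sink.deliver(key, payload) {
                ran += 1;
            }
        }
    }
    ran
}
```

With two distinct keys and three copies of each message, a correct sink reports two applied effects; any higher count would point to a missing or unstable idempotency key.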

- Expand the real-world example suite across multiple domains, not just substrate demos.

- Harden capability ergonomics for KV/blob/workspace-first authoring patterns.

- Improve Workers parity for connector bindings, auth providers, and connector-bound flows.

- Grow the connector ecosystem beyond GitHub Issues and Google Sheets, especially into richer trigger/webhook families.

- Land clearer stability tiers for scaffold vs preview vs supported public surfaces.

- Continue importer, registry certification, plugin, and studio work from their current scaffold baselines.

Refer to impl-docs/impl-plan.md and impl-docs/surface-and-buildout.md for detailed sequencing.

- Ensure your Rust toolchain is at least 1.90.

- Run formatting (`cargo fmt`) and linting (`cargo clippy -- -D warnings`).

- Execute relevant `cargo check`/`cargo test` commands (set `CARGO_TARGET_DIR=.target` if needed).

- Adhere to the diagnostic registry when adding new validations or lints.

- Update documentation (README, `impl-docs`, schemas) alongside code changes.

- Review `AGENTS.md` for contributor guidelines tailored to agent-assisted workflows.

For large features, coordinate across phases to keep milestones achievable and to avoid bypassing gating harnesses (policy, certification, importer SLOs).

- `impl-docs/rust-workflow-tdd-rfc.md`: Full technical design (macros, IR, runtime, policy, importer).

- `impl-docs/user-stories.md`: Scenario-driven requirements (S1–S8 and beyond).

- `AGENTS.md`: Contributor guidelines for human/agent collaboration.

- `schemas/examples/etl_logs.flow_ir.json`: Reference Flow IR example matching schema, validators, and exporters.

- `crates/dag-macros/tests/`: Trybuild suites for DSL diagnostics.

- `crates/kernel-plan/src/lib.rs`: Validation implementation & tests (great starting point for additional rules).

Lattice is early in its implementation, but the scaffolding above lays the foundation for a robust, typed, policy-aware workflow engine that balances code-first ergonomics with the automation opportunities AI agents demand. Dive into the examples, run the CLI, and extend the crates to bring the next phases to life. Happy building!